When your platform starts flagging churn, predicting deal closures, and building automation sequences before you ask, the question isn’t whether the AI works. It’s whether the humans behind it are ready.

You turned on ActiveCampaign’s AI features because the promise was compelling: less manual work, smarter campaigns, better results without adding headcount. What most marketing teams discover six months later is that the AI didn’t reduce the workload – it raised the bar for what the humans directing it need to know.

In March 2026, ActiveCampaign stood on a keynote stage and made a claim most software companies avoid because it’s too easy to disprove: they were “First to Launch AI that Acts, Not Just Answers.”

Not generates. Not suggests. Acts.

That single sentence describes a categorical shift in what marketing software actually is. For the past decade, marketing automation meant a human builds a workflow and the system executes it. You were always the one who initiated. The platform waited.

Active Intelligence doesn’t wait.

It monitors your performance signals continuously. It identifies behavioural patterns across your contact database. It surfaces recommendations before you open the dashboard. A contact group quietly moving toward churn, a lead nurture sequence haemorrhaging 20% of prospects at step three, a campaign underperforming against the industry benchmarks the platform tracks: the system flags all of this without being asked. Then, in its conversational workspace, it offers to fix it.

This is genuinely impressive technology. The ROI numbers ActiveCampaign publishes from 450+ customers are real: 13 hours per week saved per marketing team, a 12% average conversion rate for AI-driven campaigns compared to 6% for non-optimised ones, an average saving of $4,739 per month for daily AI users.

The mainstream narrative stops there and calls this pure progress.

It isn’t.

What ActiveCampaign built is a system that is, in many ways, smarter than the average marketing team operating it. And that creates a gap — between the sophistication of the AI layer and the readiness of the human layer that’s supposed to direct it. When the machine outpaces the team, three predictable failures emerge: the marketer’s role collapses without a strategic replacement, the data feeding the AI is too broken to trust its outputs, and organisations mistake automation activity for actual business results.

This post is about that gap, why it exists, and what it takes to close it.

What ActiveCampaign’s Autonomous Workspace Does and Why It’s Different From Any Marketing Tool Before It

Before the critique, the context. If you’re evaluating ActiveCampaign or already using it without fully understanding what changed in the last 18 months, here’s what you’re actually working with.

Active Intelligence is not a feature. It’s the intelligence engine that runs across the entire platform. ActiveCampaign describes it as a move beyond “prompt-and-respond” systems, where a human initiates every action, to “agent-to-user” AI, where specialised AI agents continuously analyse data and bring insights to you.

In practice, this means a set of coordinated capabilities that each handle a different part of the customer journey.

Predictive Sending analyses each individual contact’s last 100 interaction points to determine their personal optimal delivery time: not a segment average or a best-practice send window, but the specific moment this person is most likely to open an email. After an email is queued, the system places it in a 24-hour holding window, then delivers it at the predicted optimal moment. To keep the model from becoming fixed in its assumptions, 10% of emails are sent at randomised times to discover new engagement patterns, while 90% go out at the predicted time.

Predictive Content uses natural language processing to serve different content variants to different contacts within the same campaign. A marketer writes up to five distinct versions of a content block (varying length, tone, product focus, level of detail) and the AI determines which version to show each contact based on their historical messaging preferences. The system updates daily, tracking which persona groups responded to which styles.
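The daily learning loop behind Predictive Content can be sketched as a per-persona tally of which variant earned clicks. This is an illustrative stand-in, not the platform’s method: it assumes click-through is the success signal and that contacts are already grouped into personas, it leaves out the natural language processing entirely, and `ContentBlock`, `daily_update` and `choose` are hypothetical names.

```python
from collections import defaultdict
from dataclasses import dataclass, field

MAX_VARIANTS = 5  # a marketer writes up to five versions of a content block


@dataclass
class VariantStats:
    shown: int = 0
    clicked: int = 0

    def smoothed_rate(self) -> float:
        # One pseudo-click and two pseudo-views, so a variant with very few
        # impressions is neither written off nor crowned too early.
        return (self.clicked + 1) / (self.shown + 2)


@dataclass
class ContentBlock:
    variants: list[str]
    # persona -> variant index -> running totals
    stats: dict[str, dict[int, VariantStats]] = field(
        default_factory=lambda: defaultdict(lambda: defaultdict(VariantStats))
    )

    def __post_init__(self) -> None:
        if not 1 <= len(self.variants) <= MAX_VARIANTS:
            raise ValueError(f"expected 1 to {MAX_VARIANTS} variants, got {len(self.variants)}")

    def daily_update(self, persona: str, results: list[tuple[int, bool]]) -> None:
        """Fold one day's (variant_index, clicked) observations into the totals."""
        for index, clicked in results:
            entry = self.stats[persona][index]
            entry.shown += 1
            entry.clicked += int(clicked)

    def choose(self, persona: str) -> str:
        """Serve the variant with the best smoothed click rate for this persona."""
        per_variant = self.stats[persona]
        best = max(
            range(len(self.variants)),
            key=lambda i: (per_variant[i].smoothed_rate(), -i),  # ties go to the lower index
        )
        return self.variants[best]


block = ContentBlock(["Short and direct", "Detailed walkthrough", "ROI-focused"])
block.daily_update("finance", [(2, True), (2, True), (0, False), (1, False)])
print(block.choose("finance"))  # prints: ROI-focused
```

The smoothing is a deliberate choice: an unseen variant starts at 50%, so it stays competitive until real responses push it down. That is also why near-identical variants waste the mechanism; the loop can only separate options that readers actually respond to differently.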

Win Probability applies machine learning to every deal in the CRM pipeline, assigning a percentage likelihood of closure. It pulls from four data categories: email engagement history, contact attributes (job title, lead source, email domain), deal context (pipeline stage, time spent in each stage), and site behaviour tracked via ActiveCampaign’s site tracking. The result is meant to function as a “salesperson working 24/7”: a continuously updated signal telling your sales team where to focus.

The Active Intelligence Workspace, launched in 2025, ties all of this together in a conversational interface. A marketing leader can type: “Design a 4-email lead nurturing automation including industry insights, success stories, objection handling, and a clear offer with urgency.” The system generates the entire flow (copy, structure, sequencing) optimised for the business’s specific industry and the brand voice parameters it’s been given.

This is not a content assistant. It’s an autonomous execution layer.

Already, 77% of ActiveCampaign users have adopted Active Intelligence features. The conversion figures quoted above are consistent with broader market data: AI-driven personalisation across the industry has shown increases of up to 35% in purchase frequency, which makes ActiveCampaign’s platform-specific results credible rather than exceptional. At its Spring 2026 Keynote, the company announced its first autonomous marketing connector for Anthropic’s Claude, further deepening the platform’s reasoning and content generation capabilities. The direction of travel is clear: more autonomy, with less human initiation required.

Which brings us to the part that doesn’t make the keynote slides.

What ActiveCampaign’s Autonomous Workspace Does to the Technical Marketer Role

The single most important thing to understand about Active Intelligence is that it doesn’t reduce the requirement for human judgment – it relocates it.

For a decade, the most valued person on a marketing automation team was whoever could architect complex conditional logic: the “if/then tree builder” who could map a lead nurture sequence across 12 branches, configure lead scoring rules across 40 contact fields, and translate a sales process into a CRM workflow without breaking either system.

These people were genuinely hard to hire. They sat at the intersection of marketing strategy, CRM administration, and systems thinking. When they left, they were expensive to replace.

The Active Intelligence Workspace just made their core technical skill a prompt.

Type your campaign requirements in plain language and receive a complete, branched automation sequence in return. The technical execution that once required days of careful architecture now takes seconds. This isn’t a marginal efficiency improvement — it’s the elimination of an entire skill category as a competitive differentiator.

ActiveCampaign themselves frame this shift explicitly: success in the Active Intelligence era is determined by the ability to “train” the AI on the business’s unique priorities and brand voice, not by the ability to manually build the workflows. They call it a move from “doer” to “orchestrator.”

The problem is that orchestrator thinking is not the default. It’s a categorically different skill set, and most marketing teams haven’t been retrained to develop it.

What does the orchestrator role actually require? It requires being able to define brand voice and strategic priorities before the AI starts generating – not as an afterthought, but as the first step. Every AI-generated subject line, every automated sequence, every Predictive Content variant the system creates will reflect whatever context it’s been given. If that context is thin or generic, the outputs will be thin and generic, regardless of the technical sophistication of the model producing them.

It requires recognising when AI-generated content is technically correct but strategically wrong. Picture this: the AI generates a perfectly structured urgency-based email to a prospect — the copy is clean, the CTA is clear, the send time is optimised. What the AI doesn’t know is that this prospect flagged budget concerns in a sales call two days ago. A strategist who knows that conversation rewrites the email entirely. A team operating purely on AI outputs sends the urgency email and wonders why the prospect goes cold.

It requires treating AI output as a high-quality first draft, not a finished product. The AI Campaign Builder generates quickly. A senior strategist’s job is to spend the time saved on finishing: adjusting the contextual details, refining the brand-specific language, and catching the sequencing logic that comes from knowing the actual customer, something pattern-matching across training data can’t replicate.

And it requires understanding the governance layer that most organisations have skipped entirely. Industry research indicates that 61% of organisations lack formal AI usage policies and 70% of marketers lack generative AI training. The same tools that are genuinely reducing operational costs and improving anomaly detection are being deployed into teams that haven’t been structured to supervise them. The result is organisations that are technically using autonomous AI while functionally still operating like manual shops, just producing more output per hour.

This matters beyond internal productivity. When AI generates the volume and humans disengage from the quality layer, the market notices. B2B vendor credibility has declined from 67% in 2022 to just 46% in 2025, while 90% of buyers now actively verify vendor content before acting on it. The buyers who matter most — the decision-makers with budget authority — are becoming more sceptical of content that feels produced rather than considered.

This means the human finishing layer isn’t just a quality-assurance step. It’s a trust-building mechanism. When 80% of B2B buyers accept AI-generated content conditionally, the condition is that it demonstrates genuine understanding of their situation. That understanding doesn’t come from a model. It comes from a strategist who knows the customer, and who treated the AI’s draft as a starting point rather than a conclusion.

The shift from doer to orchestrator sounds like a promotion. For the teams that make it genuinely, it is. For the teams that adopt the terminology without changing the underlying way they work, the AI will produce more content with less strategic direction — and the results will reflect that.

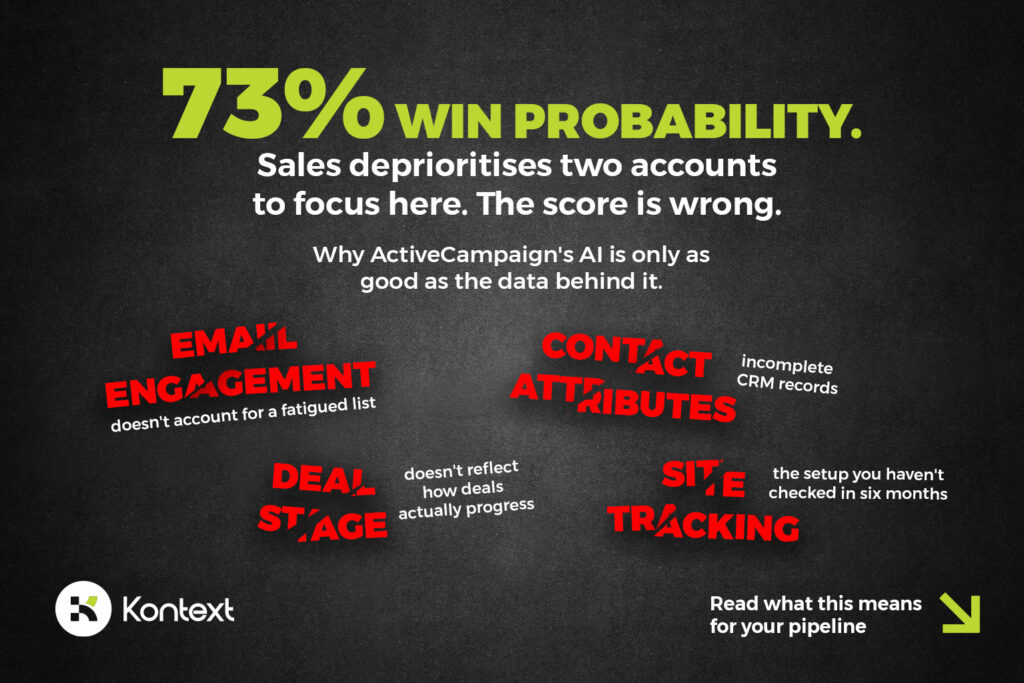

Why ActiveCampaign’s Win Probability Scores Break on Bad CRM Data

The Win Probability feature is where the gap between AI sophistication and organisational readiness becomes most expensive — and most invisible.

The promise is compelling. Every deal in your CRM pipeline receives a continuously updated percentage likelihood of closure. Sales teams can see at a glance where to focus their energy. Managers can forecast with greater accuracy. The AI does the analysis that would otherwise require a dedicated data science function — the kind of predictive modelling that used to be exclusive to enterprise organisations with six-figure analytics budgets.

But Win Probability is only as reliable as the four data categories it draws from. And each of those categories has a structural vulnerability that most mid-market businesses haven’t addressed.

Email engagement data tells the AI how a prospect has interacted with your communications. Opens, clicks, replies — frequency and recency. The problem is that this signal is only meaningful if your email deliverability is strong, your list segmentation reflects actual buyer behaviour, and your contacts haven’t been over-emailed into a state of disengagement that looks like disinterest but is actually a response to contact frequency. If your open rates are suppressed by deliverability issues or fatigued sending patterns, the AI reads a disengaged contact where a genuinely interested prospect exists.

Contact attributes — job title, lead source, email domain — depend on clean, consistently maintained CRM records. Here’s the practical reality: salespeople spend approximately 20% of their time on CRM administration, data entry, and reporting. That’s time squeezed from selling. Under pressure, data entry quality drops. Job titles get left blank, lead sources get misattributed, email domains get entered inconsistently. The AI has no way to distinguish a carefully maintained record from a hastily completed one. It treats both with equal confidence.

Deal context — pipeline stage and time spent in each stage — only produces meaningful signal if pipeline stages are clearly defined and consistently applied across the sales team. Research on sales and marketing alignment shows that 79% of marketing leads never convert to sales, partly because the qualification criteria between the two functions aren’t actually aligned. If your pipeline stages reflect how the CRM was originally configured rather than how deals actually progress, the AI is learning from a map that doesn’t match the territory.

Site behaviour — pricing page visits, case study views, product page interactions — requires site tracking to be properly configured and maintained. Many mid-market businesses set up site tracking once and haven’t reviewed it since. Tag implementations drift. Pages get redesigned and tracking breaks. The AI sees a contact who hasn’t visited the pricing page as a less-engaged prospect — when in reality, the tracking stopped working six months ago.

Now consider what happens when all four of these data quality issues exist simultaneously — which is not unusual. Picture a specific deal sitting at 73% Win Probability. The sales director deprioritises follow-up on two other accounts to focus effort here. The score looks authoritative. What it doesn’t reflect: the contact’s job title was entered generically as “Manager,” site tracking broke three months ago when the pricing page was redesigned, and the deal stage was moved forward by a rep who confused “demo scheduled” with “proposal reviewed.” The AI learned from all of this. The score it produced is confident and wrong. The two deprioritised accounts — with cleaner data, lower scores — were the real opportunities.

This is what we call the bottleneck paradox: AI amplifies what’s already in the system. If the system contains dysfunction, the AI scales it up and presents it with algorithmic confidence. A manual sales process with poor CRM hygiene produces wrong priorities that are obvious to anyone paying attention. An AI-powered sales process with poor CRM hygiene produces wrong priorities that look like data-driven decisions.

The same logic applies across the entire Active Intelligence stack. Predictive Sending delivers meaningful uplift when the contact database is clean enough for pattern recognition to work, but if your list mixes thousands of disengaged contacts with genuinely active prospects, the model learns from noise. Predictive Content improves click-through rates when someone has actually crafted meaningfully different variants, but if your five “variants” are minor rewrites of the same content block, the AI is optimising a distinction that doesn’t exist. AI-Suggested Segments create useful audience groups when the underlying behavioural data is rich, but if your contact records are sparse, the segments will be too.

The technology layer is sophisticated. The data foundation question is not a technology question. It’s a human and organisational one, and it has to be answered before the AI is trusted to act on it.

Notably, ActiveCampaign’s acquisition of “Feedback Intelligence”, an AI evaluation platform, signals that the company understands what comes next: closing the loop between AI outputs and quality validation. That closed loop still requires humans who know what good looks like and can train the system accordingly. The acquisition confirms the argument; it doesn’t resolve it.

Companies with aligned sales and marketing teams are nearly three times more likely to beat their new customer targets. That alignment improves deal closure efficiency by 67% and reduces customer acquisition costs by 30%. Win Probability is designed to accelerate aligned teams. Deployed into misaligned ones, it accelerates the misalignment.

How to Build the Human Layer That Makes Active Intelligence Actually Work

The question isn’t whether to use Active Intelligence. It’s whether the conditions are in place for it to produce results rather than amplify existing problems.

None of the issues above are unsolvable. But they have to be addressed in the right order.

Start with the data foundation, not the AI features.

Before activating Win Probability, audit the CRM. Not a quick review — a real audit. Are pipeline stages clearly defined and consistently applied? Do they reflect how deals actually progress, or how the system was originally set up? Are contact records complete enough to provide meaningful signal to a machine learning model? Is site tracking configured, tested, and maintained?

Before running Predictive Content, assess whether the variants you’re creating are genuinely differentiated. Different length, different tone, different angle — not the same email with a different opening sentence. The AI can only optimise the choices it’s given.
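One quick way to test differentiation before the AI does is to measure how much text two variants share. This sketch uses the standard-library `difflib.SequenceMatcher` on word sequences; `near_duplicates` is a hypothetical helper and the 0.8 cut-off is an assumption. Surface similarity is only a proxy: two variants can use entirely different words and still make the same argument, so a clean result is necessary, not sufficient.

```python
from difflib import SequenceMatcher
from itertools import combinations

SIMILARITY_LIMIT = 0.8  # assumed cut-off; tune it on variants you already trust


def near_duplicates(variants: list[str], limit: float = SIMILARITY_LIMIT) -> list[tuple[int, int, float]]:
    """Return index pairs of variants whose word sequences overlap at or above `limit`."""
    flagged = []
    for (i, a), (j, b) in combinations(enumerate(variants), 2):
        # Compare word by word: whitespace differences don't count, and a
        # swapped word counts once rather than letter by letter.
        ratio = SequenceMatcher(None, a.lower().split(), b.lower().split()).ratio()
        if ratio >= limit:
            flagged.append((i, j, round(ratio, 2)))
    return flagged


variants = [
    "Our platform saves your team hours every week with automated reporting and alerts.",
    "Our tool saves your team hours every week with automated reporting and alerts.",
    "Finance leaders: cut month-end close from ten days to four.",
]
print(near_duplicates(variants))  # [(0, 1, 0.92)]
```

The first two variants differ by a single word, so they are flagged; the third takes a different angle for a different reader and passes.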

A practical way to think about the investment split: the technology itself accounts for roughly 30% of what determines results. The change management and process alignment required to use it well accounts for 40%. The data foundation that determines what the AI actually has to work with accounts for the remaining 30%. Most businesses invert this — spending the majority of their effort on tool configuration and almost nothing on the other two categories. That’s why the results don’t match the brochure.

Define brand voice and strategic priorities before the AI generates anything.

ActiveCampaign’s own guidance recommends using the AI Behaviour Customisation feature to set brand voice and strategic preferences once, platform-wide. This is the orchestrator’s first and most important job — not monitoring outputs, but creating the context the AI operates within.

A team that does this carefully gets AI-generated content that sounds like them, reflects their actual positioning, and serves their specific customer relationships. A team that skips this gets outputs that are technically competent and strategically generic — the marketing equivalent of a suit that fits every body and flatters none.

This is where the approach we call the Cyborg Method applies in practice: AI handles execution speed, volume, and pattern recognition; humans handle strategic context, brand integrity, and the judgment calls the AI can’t make. It’s not a philosophical position; it’s an operational requirement. The human input isn’t oversight of the AI. It’s the strategic direction that makes the AI’s output worth anything.

Establish what “good” looks like before deploying AI at scale.

AI generates volume. The question is whether volume serves you. If a marketing leader can’t articulate what a high-quality lead nurture sequence looks like for their specific business — what journey stage each email should address, what objection each touchpoint should handle, what action the sequence should move the prospect toward — then more automation just means more emails going to more people with less strategic direction.
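Those three questions can be written down as a brief that every generated sequence is checked against. A minimal sketch, assuming a three-stage journey (awareness, consideration, decision) and hypothetical `EmailBrief` and `SequenceBrief` structures; the example mirrors the four-email nurture prompt quoted earlier in this post. The rule that a sequence shouldn’t step back to an earlier stage is an illustrative assumption, not a universal law.

```python
from dataclasses import dataclass

# Hypothetical journey stages, in the order a prospect should move through them.
JOURNEY_STAGES = ("awareness", "consideration", "decision")


@dataclass
class EmailBrief:
    topic: str
    journey_stage: str
    objection: str  # the objection this touchpoint is meant to handle


@dataclass
class SequenceBrief:
    target_action: str  # what the whole sequence should move the prospect toward
    emails: list[EmailBrief]


def review(brief: SequenceBrief) -> list[str]:
    """List the gaps between a sequence and the team's definition of 'good'."""
    problems = []
    if not brief.target_action.strip():
        problems.append("sequence has no target action")
    last_rank = 0
    for n, email in enumerate(brief.emails, start=1):
        if email.journey_stage not in JOURNEY_STAGES:
            problems.append(f"email {n}: unknown journey stage {email.journey_stage!r}")
            continue
        rank = JOURNEY_STAGES.index(email.journey_stage)
        if rank < last_rank:
            problems.append(f"email {n}: steps back from an earlier journey stage")
        last_rank = max(last_rank, rank)
        if not email.objection.strip():
            problems.append(f"email {n}: no objection assigned")
    return problems


nurture = SequenceBrief(
    target_action="book a demo before quarter end",
    emails=[
        EmailBrief("industry insights", "awareness", "we don't have this problem"),
        EmailBrief("success stories", "consideration", "it won't work for a business like ours"),
        EmailBrief("objection handling", "consideration", "it's too expensive"),
        EmailBrief("offer with urgency", "decision", ""),
    ],
)
print(review(nurture))  # ['email 4: no objection assigned']
```

Writing the brief first is the point: the checker is trivial, but it only exists once someone has decided what each touchpoint is for.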

High-performing organisations implement editorial guidelines, brand voice documentation, and quality assurance checkpoints — not to slow the AI down, but to ensure what it produces is actually usable. Research consistently shows that hybrid human-AI approaches outperform both pure-AI and pure-human content across quality, efficiency, and audience engagement. The hybrid works because humans set the standard and the AI produces to it, not the other way around.

Build the sales-marketing data loop deliberately.

Win Probability becomes genuinely useful when the CRM reflects real sales activity, pipeline stages mean the same thing to everyone who uses them, and site tracking is complete enough to tell the AI what prospects are actually doing between sales conversations. This requires a shared definition of what a qualified lead looks like, agreed pipeline stage criteria, and a data maintenance habit that doesn’t erode under sales pressure.

None of this is technically complex. All of it requires alignment — between marketing and sales on qualification criteria, between platform administrators and end users on how to use the CRM, between the business’s actual sales process and how that process is represented in the system.

The businesses that get this right find that Win Probability is genuinely useful. The businesses that skip it find that their salespeople stop trusting the score after a few weeks of acting on it and discovering it doesn’t reflect reality.

The Question Worth Asking Before You Activate the Next Feature

ActiveCampaign’s Active Intelligence is the most advanced realisation of autonomous marketing currently available. Its ability to move from “answers” to “actions” is a real technological achievement, and the direction of travel — toward greater autonomy, deeper reasoning, more proactive intervention — is not going to reverse.

The businesses that will see the 12% conversion rates, the 13 hours per week reclaimed, and the Win Probability scores they can actually trust are the ones that treated the human layer with the same seriousness as the technology layer.

They defined their brand voice before the AI started generating. They cleaned their CRM before activating predictive scoring. They trained their team to be orchestrators, not operators. They understood that an autonomous marketing platform amplifies what’s already in the organisation — and they made sure what was already there was worth amplifying.

Before the next feature activation, the questions worth asking are these: Is your CRM data clean enough to trust what the AI learns from it? Do your pipeline stages reflect how deals actually progress? Have you defined your brand voice precisely enough for AI-generated content to reflect it consistently? Does your team understand the difference between approving AI output and strategically directing it? Is your site tracking actually working?

If the answer to most of those is uncertain, that’s not a technology problem. It’s the starting point for getting the technology to work.

Not sure whether your data foundation, team structure, or AI governance is ready for an autonomous marketing platform?

That’s exactly what Kontext audits. Our AI-Augmented Marketing Audit covers five specific areas in two hours of your team’s time: CRM data quality, pipeline stage alignment, brand voice definition, AI governance readiness, and site tracking integrity. You receive a written report with prioritised recommendations — not a sales deck.

If Active Intelligence is already running, the audit tells you what to fix before the AI scales the problem. If you’re evaluating it, the audit tells you what to build before you switch it on.

[Book your audit →]